In Brief:

- High-bandwidth memory is moving from roadmap slides into commercial shipment as AI compute demand accelerates.

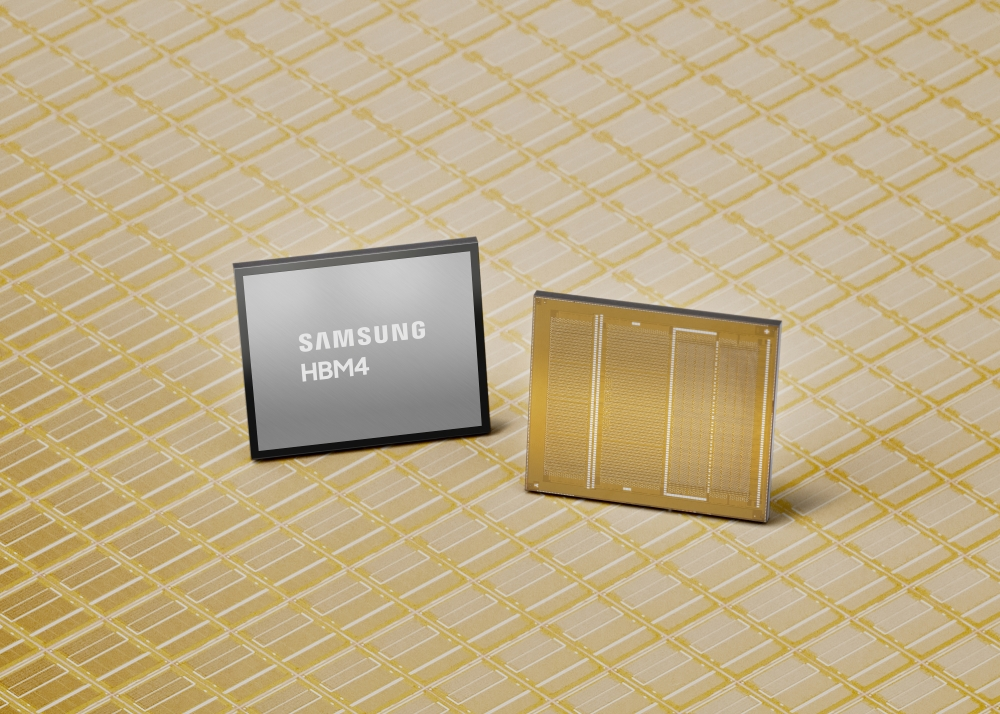

- Samsung’s HBM4 pushes pin speed, bandwidth, and efficiency using advanced DRAM and logic process nodes.

- Thermal, power, and packaging optimisation is becoming the competitive axis as I/O counts rise.

Samsung says it has begun mass production of its HBM4 high-bandwidth memory and has shipped commercial products to customers, positioning the company as an early mover as AI accelerators push memory bandwidth requirements beyond what HBM3E-class stacks can sustain.

Instead of carrying forward prior-generation design choices, Samsung says it moved HBM4 onto its sixth-generation 10 nm-class DRAM process (1c) and paired it with a 4 nm logic base die. “Instead of taking the conventional path of utilizing existing proven designs, Samsung took the leap and adopted the most advanced nodes like the 1c DRAM and 4nm logic process for HBM4,” said Sang Joon Hwang, Executive Vice President and Head of Memory Development at Samsung Electronics. “By leveraging our process competitiveness and design optimization, we are able to secure substantial performance headroom, enabling us to satisfy our customers’ escalating demands for higher performance, when they need them.”

On performance, Samsung specifies a consistent processing speed of 11.7 Gbps per pin, with headroom up to 13 Gbps. The company frames 11.7 Gbps as roughly 46% above an 8 Gbps “industry standard,” and as 1.22× the 9.6 Gbps maximum pin speed of HBM3E. At the stack level, Samsung says total memory bandwidth per stack rises to a maximum of 3.3 TB/s, 2.7× that of HBM3E.
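The per-pin comparisons can be checked with simple ratios. The sketch below is illustrative only: the constant and function names are hypothetical, and the inputs are just the figures quoted in this article.

```python
# Per-pin data rates in Gbps, as quoted in the article.
HBM4_PIN_GBPS = 11.7       # Samsung's stated HBM4 operating speed
BASELINE_PIN_GBPS = 8.0    # the 8 Gbps "industry standard" used for comparison
HBM3E_MAX_PIN_GBPS = 9.6   # maximum HBM3E pin speed cited by Samsung


def percent_above(value: float, baseline: float) -> float:
    """Return how far `value` sits above `baseline`, as a percentage."""
    return (value / baseline - 1.0) * 100.0


def multiple_of(value: float, baseline: float) -> float:
    """Return `value` expressed as a multiple of `baseline`."""
    return value / baseline


if __name__ == "__main__":
    gain = percent_above(HBM4_PIN_GBPS, BASELINE_PIN_GBPS)
    factor = multiple_of(HBM4_PIN_GBPS, HBM3E_MAX_PIN_GBPS)
    print(f"vs 8 Gbps baseline: +{gain:.2f}%")
    print(f"vs HBM3E maximum:   {factor:.2f}x")
```

Both results line up with the quoted figures: 11.7 Gbps is about 46% above 8 Gbps and about 1.22 times 9.6 Gbps.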

Capacity options follow the familiar stacking cadence, with Samsung stating it will offer HBM4 in 24 GB to 36 GB capacities using 12-layer stacking, while planning to extend the range to 48 GB via 16-layer stacking aligned to customer timelines. That capacity-and-bandwidth combination is increasingly central to accelerator platform planning, where model sizes and batch throughput are often gated by memory traffic rather than raw compute.

Samsung is also addressing the less glamorous constraints that arrive with HBM4: pin counts and power. The company says HBM4 doubles data I/Os from 1,024 to 2,048 pins, and claims a 40% improvement in power efficiency versus HBM3E, using low-voltage through-silicon via (TSV) technology and power distribution network optimisation. Samsung also claims improved thermal behaviour, citing a 10% improvement in thermal resistance and a 30% gain in heat dissipation compared with HBM3E.
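With the I/O count stated, the per-stack bandwidth figures quoted earlier can be approximated as pins multiplied by per-pin rate. This is a rough sketch rather than Samsung's own calculation: it assumes every data I/O runs at the quoted pin speed, uses decimal units (1 TB/s = 1,000 GB/s), and ignores any protocol overhead. The function name is hypothetical.

```python
BITS_PER_BYTE = 8


def stack_bandwidth_tbps(data_pins: int, gbps_per_pin: float) -> float:
    """Peak per-stack bandwidth in TB/s: pins x Gbit/s per pin, converted to bytes."""
    gigabytes_per_second = data_pins * gbps_per_pin / BITS_PER_BYTE
    return gigabytes_per_second / 1000.0


# Figures quoted in the article.
hbm4_at_rated = stack_bandwidth_tbps(2048, 11.7)     # HBM4 at the stated 11.7 Gbps
hbm4_at_headroom = stack_bandwidth_tbps(2048, 13.0)  # HBM4 at the 13 Gbps headroom figure
hbm3e_at_max = stack_bandwidth_tbps(1024, 9.6)       # HBM3E at its 9.6 Gbps maximum

if __name__ == "__main__":
    print(f"HBM4 @ 11.7 Gbps: {hbm4_at_rated:.2f} TB/s")
    print(f"HBM4 @ 13.0 Gbps: {hbm4_at_headroom:.2f} TB/s")
    print(f"HBM3E @ 9.6 Gbps: {hbm3e_at_max:.2f} TB/s")
    print(f"HBM4 headroom vs HBM3E: {hbm4_at_headroom / hbm3e_at_max:.2f}x")
```

Under these assumptions, 2,048 pins at 13 Gbps give about 3.33 TB/s, roughly 2.7 times the 1.23 TB/s of a 1,024-pin HBM3E stack at 9.6 Gbps, while 11.7 Gbps gives about 3.0 TB/s. That suggests the 3.3 TB/s maximum corresponds to the 13 Gbps headroom figure, although this is an inference from the arithmetic and not something Samsung states.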

On manufacturing and supply, Samsung is linking the HBM4 ramp to its DRAM capacity and in-house advanced packaging, alongside tighter design-technology co-optimisation between its foundry and memory organisations. The company also says it expects HBM sales to more than triple in 2026 versus 2025, with HBM4E sampling expected in the second half of 2026 and custom HBM samples planned to reach customers in 2027.

HBM performance has become a competitive headline, but the harder test will be delivery at scale — consistent stacks, predictable thermals, and reliable supply — as accelerator platforms standardise on the bandwidth levels HBM4 is now bringing into production.