In brief:

- ST is moving its sensor, MCU, and motor-control portfolio into NVIDIA’s physical-AI development stack.

- First deliverables pair Leopard Imaging hardware with ST sensing and Holoscan Sensor Bridge, while Isaac Sim gains a high-fidelity ST IMU model.

- The collaboration targets faster sim-to-real development by standardising data capture, timing, and device models across robotics platforms.

STMicroelectronics is taking a broader position in robotics by moving beyond component supply and into the reference stack that developers use to build physical AI systems. Its latest collaboration with NVIDIA brings ST sensors, microcontrollers, and motor-control hardware into the Holoscan Sensor Bridge and Isaac Sim ecosystems, tightening the handoff between real-world sensing, simulation, and deployment.

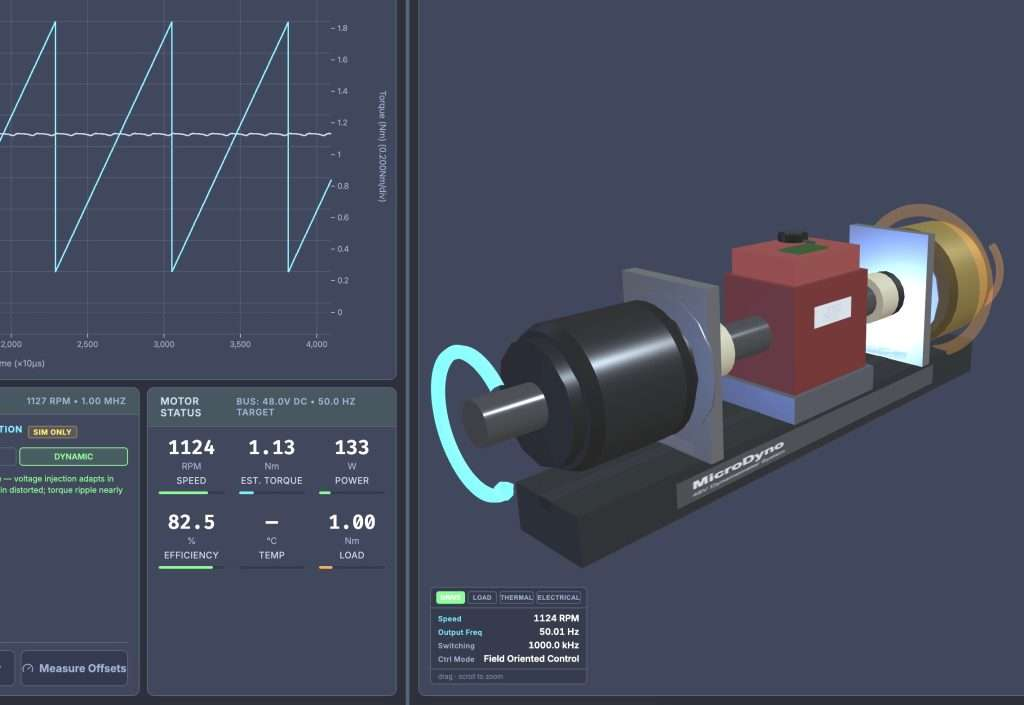

The first pieces already in circulation show where the partnership is heading. ST and Leopard Imaging have introduced a multi-sensor module for humanoid and other advanced robots. It combines VB1940 image sensors, the LSM6DSV16X IMU, and the VL53L9CX direct ToF LiDAR, and it integrates with NVIDIA Jetson and Isaac through Holoscan Sensor Bridge. ST has also added a high-fidelity IMU model to Isaac Sim, giving robotics teams a calibrated device representation earlier in the development cycle.

That matters because model training alone is rarely the bottleneck in robotics. Sensor synchronisation, data logging, board-level integration, and simulation fidelity all shape how quickly a controller can move from lab behaviour to reliable field behaviour. The technical detail accompanying ST's announcement describes Holoscan Sensor Bridge as a sensor-over-Ethernet link with hardware-accelerated networking. It also mentions proof-of-concept support on STM32H7 microcontrollers for lower-jitter connections between sensing, actuation, and GPU compute.
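To illustrate the kind of bookkeeping that sensor synchronisation involves, here is a minimal sketch that pairs camera frames with the nearest IMU sample by timestamp and discards pairs whose skew exceeds a tolerance. It uses a generic nearest-timestamp matching approach, not Holoscan Sensor Bridge's actual mechanism. The function names, the nanosecond timestamps, and the skew threshold are illustrative assumptions.

```python
from bisect import bisect_left


def nearest_imu_sample(imu_timestamps_ns, frame_ts_ns):
    """Return the index of the IMU sample closest in time to frame_ts_ns.

    Hypothetical helper: assumes imu_timestamps_ns is non-empty and sorted
    ascending. On an exact tie, the earlier sample wins.
    """
    i = bisect_left(imu_timestamps_ns, frame_ts_ns)
    if i == 0:
        return 0  # frame precedes (or equals) the first IMU sample
    if i == len(imu_timestamps_ns):
        return len(imu_timestamps_ns) - 1  # frame is after the last sample
    before = imu_timestamps_ns[i - 1]
    after = imu_timestamps_ns[i]
    # Choose whichever neighbour is strictly closer; ties go to the earlier one.
    return i if (after - frame_ts_ns) < (frame_ts_ns - before) else i - 1


def align_frames_to_imu(frame_timestamps_ns, imu_timestamps_ns, max_skew_ns):
    """Pair each frame with its nearest IMU sample.

    Hypothetical helper: returns (frame_index, imu_index, skew_ns) tuples and
    drops any pair whose absolute skew exceeds max_skew_ns.
    """
    pairs = []
    for f_idx, f_ts in enumerate(frame_timestamps_ns):
        j = nearest_imu_sample(imu_timestamps_ns, f_ts)
        skew = abs(imu_timestamps_ns[j] - f_ts)
        if skew <= max_skew_ns:
            pairs.append((f_idx, j, skew))
    return pairs


if __name__ == "__main__":
    imu = list(range(0, 100_000_000, 1_000_000))  # assumed 1 kHz IMU over 100 ms
    frames = [0, 33_333_333, 66_666_667, 150_000_000]  # ~30 fps, plus one late frame
    print(align_frames_to_imu(frames, imu, max_skew_ns=500_000))
```

Binary search keeps each lookup logarithmic in the IMU buffer length. A streaming implementation would more likely walk both sorted streams with two pointers. Either way, the matching is only as good as the timestamps it is given.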

In practical terms, ST is trying to reduce the amount of custom interface work developers have to build around cameras, IMUs, ToF devices, and motor subsystems before meaningful robotics work can begin. Bringing those devices into NVIDIA’s preferred workflow also increases the likelihood that ST parts become regular building blocks for humanoid, industrial, and service robot platforms where teams want one path from simulation to Jetson deployment.

The sim-to-real gap is not disappearing, but the tooling around it is becoming more standardised. ST’s latest physical-AI updates suggest the next phase will be less about isolated components and more about reference-ready subsystems built to move through simulation, validation, and deployment with fewer bespoke integration steps.