In Brief:

- At GTC 2026, Innodisk and Aetina are showing edge AI systems that pair high-end NVIDIA compute with deployable industrial hardware.

- The healthcare demo runs a medical vision language model (VLM) locally on an APEX-X200 system powered by an NVIDIA RTX PRO 6000 Blackwell Server Edition GPU.

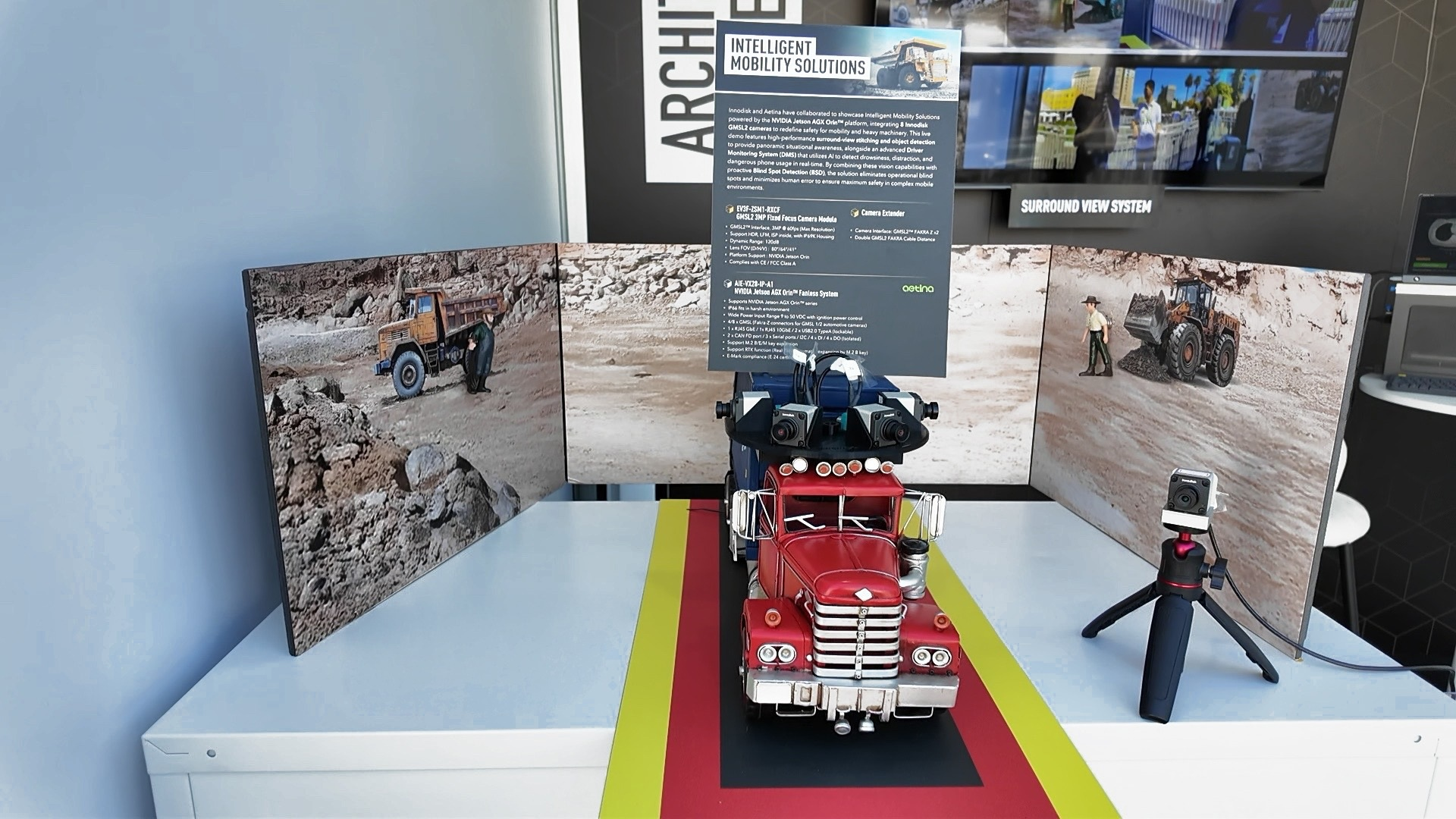

- A parallel mobility platform uses Jetson AGX Orin, custom capture hardware, and eight GMSL2 cameras for surround perception, blind-spot detection, and driver monitoring.

Innodisk is using NVIDIA GTC 2026 to make a familiar point about edge AI, this time with more convincing hardware behind it: the bottleneck is no longer model availability but system integration. Alongside its subsidiary Aetina, the company is showing two field-ready stacks that move beyond AI demo culture and into the less glamorous work of packaging compute, vision, I/O, and software into something that can survive deployment.

The headline healthcare system is a medical multimodal vision language model running on Innodisk’s APEX-X200 edge AI platform. The machine is built around the NVIDIA Blackwell architecture and TensorRT, and the published configuration puts an RTX PRO 6000 Blackwell Server Edition GPU inside a 16.5-litre chassis. Innodisk says the system can analyse X-ray and CT images locally, generate draft diagnostic reports, and turn findings into patient-facing explanations without sending workloads to the cloud. That local-inference approach is becoming easier to justify in clinical environments, where latency, bandwidth, and data governance all matter at once.

The mobility demonstration carries the same integration logic into a much harsher environment. Innodisk and Aetina have built an intelligent vehicle platform around Jetson AGX Orin, with a custom capture card linking eight GMSL2 camera modules into a single system. The stack supports surround-view stitching, active blind-spot detection, and an AI driver monitoring system that detects fatigue, distraction, and phone use. Innodisk’s wider GMSL2 portfolio adds depth to that story, because its camera modules are built for long cable runs, HDR imaging, and ruggedised operation rather than lab-bench conditions.

What gives the showcase some weight is that Innodisk is not only talking about compute density. It is also dealing with the plumbing that usually slows projects down: camera synchronisation, interface adaptation, rugged packaging, thermal management, and the mechanics of turning multiple data streams into usable inference at the edge. “Through close collaboration with NVIDIA and two decades of vertical market expertise, we help customers cut through integration complexity and accelerate their journey from advanced algorithms to field-ready solutions,” said Randy Chien, Chairman of Innodisk Group.

The result is a more credible picture of what edge AI deployment now looks like in practice. In healthcare, the emphasis is on privacy-preserving local inference in a compact system. In mobility, it is on sensor fusion and real-time safety functions under real environmental constraints. Innodisk and Aetina are exhibiting at NVIDIA GTC 2026 in San Jose through March 19, with Innodisk at Booth #7039 and Aetina at Booth #139.