In Brief:

- Navitas has introduced an 800V-to-6V DC-DC power delivery board that removes the traditional 48V intermediate bus stage in AI server racks.

- The design targets 96.5% peak efficiency, 1MHz switching, and 2,100W/in³ power density using GaN on the primary side and an ultra-low-profile layout.

- The launch reflects a broader shift towards 800VDC architectures as rack power rises, conversion losses become more visible, and board area becomes part of the compute equation.

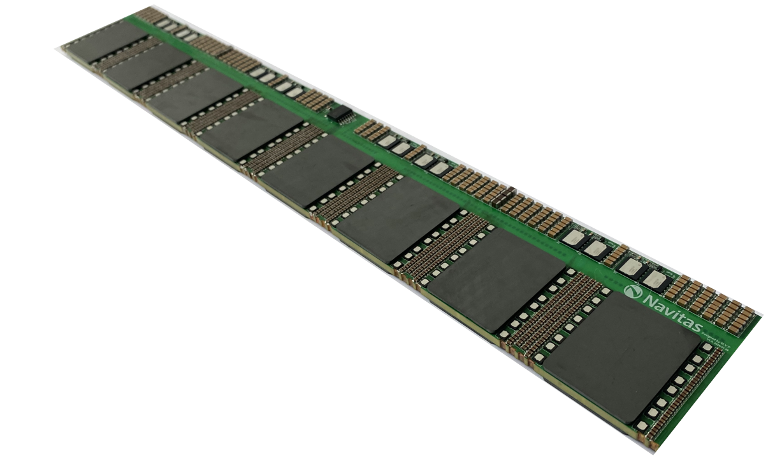

Navitas Semiconductor has introduced an 800V-to-6V DC-DC power delivery board designed to remove the traditional 48V intermediate bus stage from high-density AI server racks. The company says the new board, shown at NVIDIA GTC 2026, delivers direct conversion from 800V to 6V in a single power stage using its GaNFast technology, targeting a simpler power chain for future high-performance computing platforms.

According to Navitas, the board is designed for a peak efficiency of 96.5% at full load, switches at 1MHz, and reaches a power density of 2,100W/in³. The company also describes the assembly as an ultra-low-profile design, around 20% thinner than a mobile phone, allowing it to sit closer to the GPU board and reduce losses associated with power distribution and layout overhead. On the primary side, the design uses sixteen 650V GaNFast FETs in a stacked full-bridge arrangement, with 25V silicon MOSFETs at the centre-tapped outputs.
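To put the quoted figures into more tangible units, the short Python sketch below works through the arithmetic: it turns 96.5% efficiency into watts of heat, 1MHz into a switching period, and 2,100W/in³ into metric units. The 1kW output level is an illustrative assumption, not a rating Navitas has given, and the helper names are hypothetical.

```python
# Worked arithmetic for the headline figures quoted in the article.
# Assumption: the 1 kW output level is illustrative only; it is not a
# board rating taken from Navitas.

CUBIC_CM_PER_CUBIC_INCH = 2.54 ** 3  # about 16.387 cm^3 per in^3


def loss_watts(p_out_w: float, efficiency: float) -> float:
    """Heat dissipated by a converter delivering p_out_w at the given efficiency."""
    p_in_w = p_out_w / efficiency
    return p_in_w - p_out_w


def switching_period_s(freq_hz: float) -> float:
    """Length of one switching cycle in seconds."""
    return 1.0 / freq_hz


def density_w_per_cm3(density_w_per_in3: float) -> float:
    """Convert a power density from W/in^3 to W/cm^3."""
    return density_w_per_in3 / CUBIC_CM_PER_CUBIC_INCH


if __name__ == "__main__":
    print(f"Loss per 1 kW delivered at 96.5%: {loss_watts(1000.0, 0.965):.1f} W")
    print(f"Switching period at 1 MHz: {switching_period_s(1e6) * 1e6:.1f} us")
    print(f"2,100 W/in^3 is about {density_w_per_cm3(2100.0):.0f} W/cm^3")
```

At 96.5% efficiency, roughly 36W of heat still has to be removed for every kilowatt delivered, which is why the thermal path matters as much as the efficiency figure itself.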

The headline change is the removal of the intermediate 48V stage, which has long been a standard step in server power architectures. In conventional layouts, high-voltage input is first converted to an intermediate bus and then stepped down again to the voltages required by voltage regulator modules feeding processors, accelerators, and memory. That structure has worked well for years, but it becomes harder to justify as rack power climbs and every conversion stage adds loss, heat, volume, and component count.
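The cost of each extra stage is easy to see with a cascade: the end-to-end efficiency of converters in series is the product of the individual stage efficiencies. The sketch below uses hypothetical per-stage efficiencies of 98%, chosen only for illustration and not taken from Navitas or any other vendor's data.

```python
from math import prod


def chain_efficiency(stage_efficiencies: list[float]) -> float:
    """End-to-end efficiency of conversion stages in series: the product of stage efficiencies."""
    return prod(stage_efficiencies)


def input_power_w(p_out_w: float, stage_efficiencies: list[float]) -> float:
    """Input power needed to deliver p_out_w through the whole chain."""
    return p_out_w / chain_efficiency(stage_efficiencies)


if __name__ == "__main__":
    # Hypothetical per-stage efficiencies, for illustration only.
    chains = (("two stages", [0.98, 0.98]), ("three stages", [0.98, 0.98, 0.98]))
    for label, stages in chains:
        eta = chain_efficiency(stages)
        loss = input_power_w(1000.0, stages) - 1000.0
        print(f"{label}: {eta:.2%} end to end, {loss:.1f} W lost per kW delivered")
```

Two 98% stages already fall to about 96.0% overall, and a third takes the chain to about 94.1%. Removing a stage removes one factor from the product, which is the arithmetic behind the case for stage elimination.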

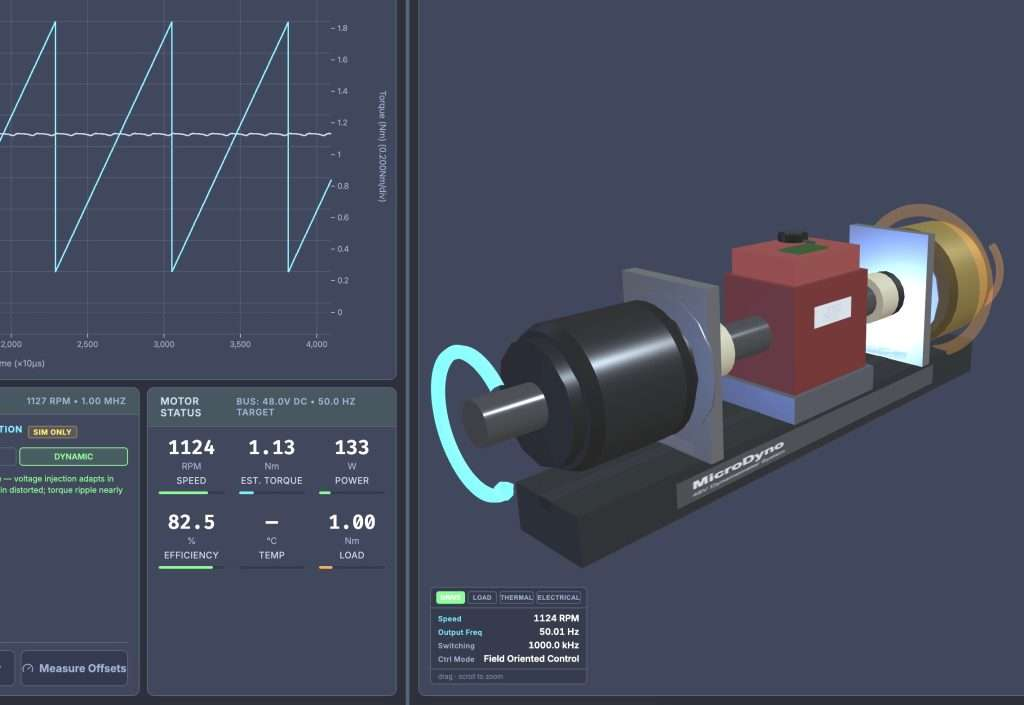

That pressure is intensifying quickly in AI infrastructure. GPU-heavy systems are driving rack densities upwards, and power delivery is moving from a supporting role to a first-order design constraint. NVIDIA has already outlined an 800VDC architecture for next-generation AI data centres, arguing that higher-voltage distribution can reduce current, cable bulk, copper demand, and total conversion stages as power levels rise. In that context, Navitas is not simply launching another converter board. It is positioning itself within a broader architectural shift in which electrical distribution, board area, and compute density are increasingly tied together.
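The argument for higher-voltage distribution follows from P = VI: for a fixed power, current falls in proportion to voltage, and resistive (I²R) loss in a given conductor falls with the square of the current. The sketch below compares a 48V bus with an 800V one at a hypothetical 1MW rack load; the load figure and function names are illustrative assumptions, and the loss ratio assumes the same conductor resistance in both cases.

```python
def bus_current_a(power_w: float, voltage_v: float) -> float:
    """Current drawn from a DC bus delivering power_w at voltage_v (I = P / V)."""
    return power_w / voltage_v


def conduction_loss_ratio(v_low: float, v_high: float) -> float:
    """How many times larger I^2 R loss is at v_low than at v_high,
    for the same delivered power and the same conductor resistance."""
    return (v_high / v_low) ** 2


if __name__ == "__main__":
    RACK_POWER_W = 1e6  # hypothetical megawatt-class rack, illustration only
    for v in (48.0, 800.0):
        print(f"{v:>5.0f} V bus: {bus_current_a(RACK_POWER_W, v):,.0f} A")
    print(f"I^2R loss ratio, 48 V vs 800 V: {conduction_loss_ratio(48.0, 800.0):.0f}x")
```

That works out to roughly 20,800A at 48V against 1,250A at 800V, and about 278 times the conduction loss through the same copper. In practice, part of that margin is typically traded for thinner conductors, which is where the copper and cable-bulk savings come from.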

The engineering appeal of direct 800V-to-low-voltage conversion is easy to see. Fewer stages can mean lower loss, less wasted space, and fewer opportunities for thermal bottlenecks to accumulate across the chain. Navitas also argues that stepping directly to 6V, rather than to a conventional 12V rail, halves the conversion ratio that downstream VRMs must handle, which can improve the final stage feeding fast-changing accelerator loads. In dense systems where transient response and board real estate both matter, that is a meaningful claim.
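The 6V claim can be checked with simple ratios. For an ideal buck regulator in continuous conduction, the duty cycle is approximately D = Vout/Vin, so halving the input voltage halves the step-down ratio and doubles D for the same output. The sketch assumes a hypothetical 0.8V accelerator core rail, a value chosen for illustration rather than taken from Navitas or NVIDIA.

```python
def step_down_ratio(v_in: float, v_out: float) -> float:
    """Conversion ratio a regulator must handle (Vin / Vout)."""
    return v_in / v_out


def ideal_buck_duty(v_in: float, v_out: float) -> float:
    """Ideal, lossless buck duty cycle in continuous conduction: D = Vout / Vin."""
    if not 0.0 < v_out < v_in:
        raise ValueError("a buck stage needs 0 < v_out < v_in")
    return v_out / v_in


if __name__ == "__main__":
    V_CORE = 0.8  # hypothetical accelerator core voltage, illustration only
    for v_in in (12.0, 6.0):
        print(
            f"{v_in:>4.0f} V -> {V_CORE} V: "
            f"ratio {step_down_ratio(v_in, V_CORE):.1f}:1, "
            f"duty {ideal_buck_duty(v_in, V_CORE):.1%}"
        )
```

A less extreme duty cycle eases minimum on-time limits, and a lower input voltage reduces switching loss, which can leave room for a higher switching frequency. Those are the usual routes by which a lower VRM input is said to help the final stage.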

None of that makes the design problem simple. High-frequency conversion at these voltages still places heavy demands on switch technology, magnetics, control, packaging, and thermal management. Moving closer to the load helps, but it also tightens layout constraints and raises expectations for reliability. AI hardware has an unusual way of exposing every weak link in the power chain, because utilisation profiles, transients, and thermal load can all shift abruptly under real workloads rather than in neat lab conditions.

Even so, the launch captures where power electronics is moving. Gallium nitride has already established itself in chargers, adapters, and certain server and industrial power stages, but the next step is deeper system-level deployment where it changes architecture rather than just improving an existing block. That is where the most meaningful design shifts tend to happen. Once the conversation turns from device substitution to stage elimination, the power stack itself starts to change shape.

There is also a wider ecosystem effect. Texas Instruments has separately outlined an 800VDC power architecture for future AI data centres with NVIDIA, and the emerging message is that power conversion is becoming part of the competitive battleground in AI infrastructure, not a hidden support function. As compute platforms push towards megawatt-class racks and denser accelerator trays, the efficiency gains available in power delivery are too large to ignore and too expensive to leave to legacy architectures.

For engineers watching beyond the data-centre sector, the implications are still worth noting. Industrial and embedded systems are not about to adopt 800V rack power wholesale, but the underlying lessons on conversion density, stage reduction, packaging, and power-path optimisation will travel. Navitas’ new board is an AI-infrastructure product first, yet it also signals how quickly power architecture is being redrawn when compute demand moves faster than the assumptions beneath it.