In Brief:

- NVIDIA has launched open AI models for quantum processor calibration and error-correction decoding.

- The Ising family combines a 35B vision-language model with 3D CNN decoder models.

- Quantum progress is becoming as much a control, software, and accelerated-compute problem as a hardware one.

NVIDIA has launched Ising, an open family of AI models designed to tackle two of quantum computing’s most persistent engineering problems: processor calibration and real-time error-correction decoding. The release brings together a 35-billion-parameter vision-language model for calibration tasks and a pair of 3D CNN-based decoder models aimed at improving speed and accuracy in quantum error correction.

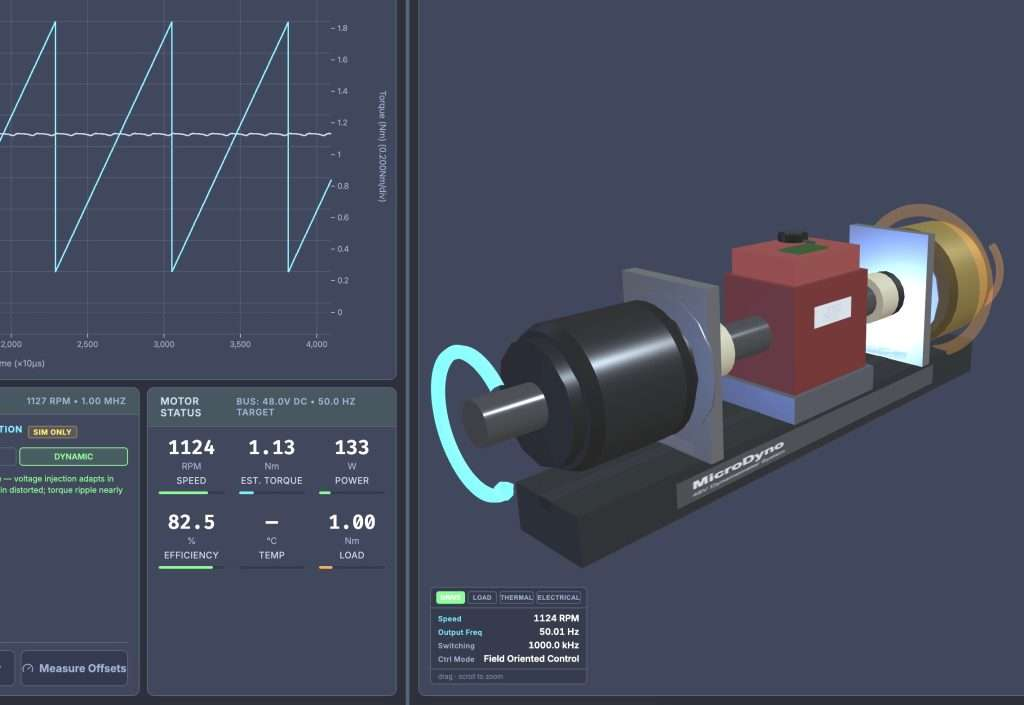

The company said Ising Calibration was trained on data spanning multiple qubit modalities, including superconducting qubits, quantum dots, ions, neutral atoms, and electrons on helium. NVIDIA also introduced QCalEval, a benchmark for calibration workflows built around real quantum computer outputs. On that benchmark, the company said Ising Calibration 1 outperformed several large general-purpose models on average. The broader point is that quantum control is starting to demand domain-specific AI rather than generic language models bolted onto lab tooling after the fact.

The Ising Decoding side of the release is more closely tied to runtime operation. NVIDIA said the new framework lets teams train compact 3D CNN pre-decoders for rotated surface-code error correction, with base models tuned either for speed or for higher logical-error-rate improvement. In one example, the company reported that the fast pre-decoder paired with PyMatching ran 2.5 times faster than PyMatching alone and was 1.11 times more accurate at a specific code distance and physical error rate. For the more accurate model plus PyMatching, NVIDIA reported 2.33 microseconds per round and a 1.53 times improvement in logical error rate in its published setup.
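To make the pre-decoder idea concrete, here is a minimal Python sketch under loudly stated assumptions. It does not use NVIDIA's models, a 3D CNN, or a surface code. Instead it uses a toy 1D repetition code and a hand-written local rule (the hypothetical `local_predecode`) as a stand-in for a learned pre-decoder. The only point it illustrates is the division of labour: a cheap local stage resolves easy, isolated errors and hands a sparser residual syndrome to a global decoder such as PyMatching.

```python
def syndrome(errors):
    """Parity checks of a 1D repetition code: check i compares bits i and i+1."""
    return [errors[i] ^ errors[i + 1] for i in range(len(errors) - 1)]


def local_predecode(syn):
    """Hypothetical local rule standing in for a learned pre-decoder.

    Two adjacent defects are explained by a single flip on the bit they
    share, so that bit is marked for correction and both defects are cleared.
    Anything the rule cannot explain locally is left in the residual syndrome.
    """
    residual = list(syn)
    correction = [0] * (len(syn) + 1)
    i = 0
    while i < len(residual) - 1:
        if residual[i] and residual[i + 1]:
            correction[i + 1] ^= 1          # flip the shared data bit
            residual[i] = residual[i + 1] = 0
            i += 2                          # skip past the pair just cleared
        else:
            i += 1
    return correction, residual


# Illustrative error pattern (made up): one isolated flip and one two-bit chain.
errors = [0, 0, 1, 0, 0, 0, 1, 1, 0]
syn = syndrome(errors)
correction, residual = local_predecode(syn)

print("defects before pre-decoding:", sum(syn))
print("defects left for the global decoder:", sum(residual))
```

In this toy run the isolated flip is handled locally, while the two-bit chain produces defects that are not adjacent and so remains in the residual for the global stage. Fewer defects generally means less matching work, which is the intuition behind pairing a fast pre-decoder with PyMatching; the actual speed and accuracy figures depend on NVIDIA's published setup, not on this sketch.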

The launch shifts attention back to the classical side of quantum systems, where much of the engineering bottleneck now sits. Qubits remain fragile, and better hardware alone does not solve the operational problem of calibrating, monitoring, and correcting them in real time. If quantum systems are to scale, they need control and correction layers that are both low-latency and adaptable to the noise patterns of specific hardware. That makes AI inference, GPU acceleration, and deployment tooling part of the quantum stack rather than adjacent support functions.

There is also an open-tooling angle here that could matter to developers. NVIDIA is packaging Ising not simply as a benchmark demo, but as a set of base models, training frameworks, and deployment workflows that laboratories and hardware builders can adapt to their own systems while keeping proprietary processor data on-site. That approach fits the current state of the sector. Quantum hardware remains fragmented across modalities, and there is no obvious single control architecture that serves every platform equally well. Open models that can be specialised at lab level may prove more useful than monolithic software stacks that assume a single route to scale.

For electronics and compute engineers, the release is another reminder that the path to useful quantum systems will run through heterogeneous architectures. Quantum processors, GPUs, real-time interconnects, software frameworks, and control electronics are becoming more tightly coupled, not less. In practical terms, that means the boundary between processor design, accelerator design, and instrument control is getting harder to draw. NVIDIA’s Ising launch does not settle the question of which quantum hardware will win. It does make one point clearer: the classical infrastructure around the qubits is becoming a strategic design problem in its own right.