In brief:

- Mythic is betting its next APU generation on embedded non-volatile memory that can hold more analog states per cell.

- SST’s memBrain pairs SuperFlash process availability with up to eight bits of storage per cell, low read current, and long retention for compute-in-memory.

- The decision points to a bigger issue in analog AI: manufacturable memory technology is becoming as strategic as the compute architecture itself.

Mythic has chosen memBrain technology from Microchip’s SST unit as the embedded memory foundation for its next-generation analog processing units, and the decision says as much about manufacturability as it does about raw efficiency. Mythic says the pairing will deliver 120 TOPS/W for AI inference, using SuperFlash-based non-volatile bitcells to store more of the model directly where computation happens.

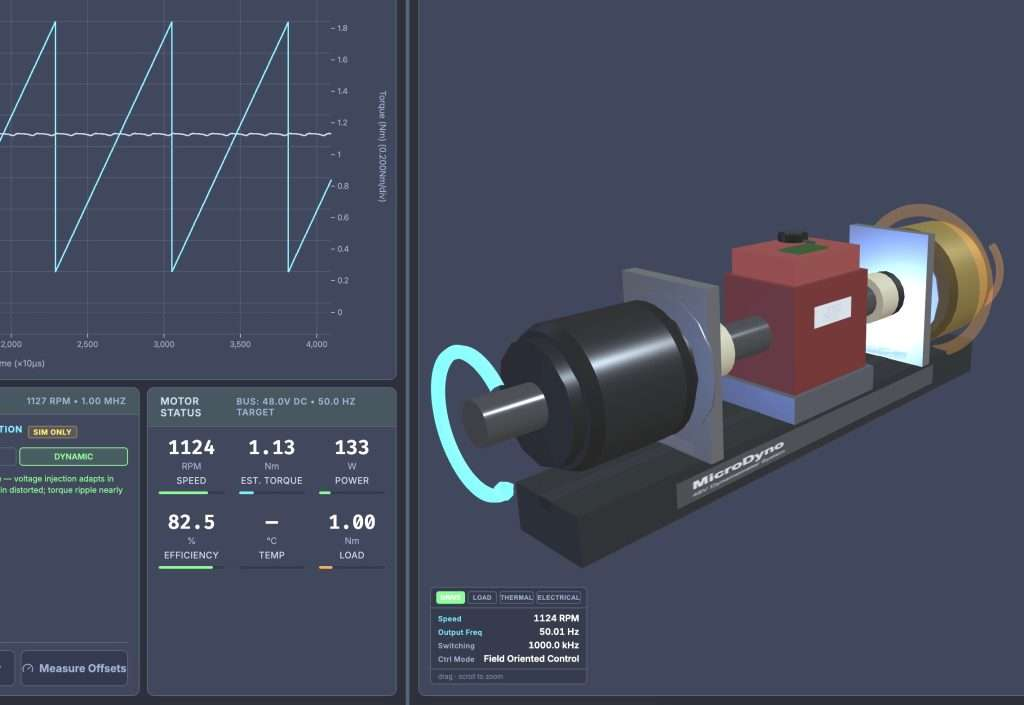

That architecture is central to Mythic’s broader direction. The company has been building its APU line around analog compute-in-memory, where weights are held inside the compute fabric rather than moved constantly across separate memory hierarchies. Its current M1076 device already targets up to 25 TOPS in a single chip for edge AI, and the new memory partnership extends that approach toward larger edge and data-centre inference designs.

SST’s memBrain cell brings several traits that fit that roadmap: up to eight data bits per bitcell, single-digit nanoamp read current, 10-year data retention, and 100,000 endurance cycles. Microchip also says the technology has already been developed in 40 nm and 28 nm foundry processes using production-ready SuperFlash, with 22 nm development planned. That matters in a segment where impressive lab results do not automatically translate into foundry-ready silicon.
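To make the bits-per-cell figure concrete, the sketch below shows what eight bits per bitcell would imply for a compute-in-memory multiply: each cell would need to resolve 2^8 = 256 distinct levels, and a matrix-vector product is formed by summing per-cell products along each row. This is an illustrative model only. The function names, the symmetric weight range `w_max`, and the linear level mapping are assumptions made for the example, not details of memBrain or of Mythic's APU design.

```python
def levels_per_cell(bits: int) -> int:
    """Number of distinct states a cell must resolve to hold `bits` bits."""
    return 2 ** bits


def quantize(weight: float, bits: int = 8, w_max: float = 1.0) -> int:
    """Map a weight in [-w_max, w_max] to an integer cell level (assumed linear mapping)."""
    top = levels_per_cell(bits) - 1
    clipped = max(-w_max, min(w_max, weight))
    return round((clipped + w_max) / (2 * w_max) * top)


def dequantize(level: int, bits: int = 8, w_max: float = 1.0) -> float:
    """Recover the weight value that a stored cell level represents."""
    top = levels_per_cell(bits) - 1
    return level / top * 2 * w_max - w_max


def cim_matvec(weights, inputs, bits: int = 8, w_max: float = 1.0):
    """Idealized in-memory matrix-vector product.

    Each weight is stored once as a cell level; each output is the sum of
    (stored weight * input) along a row, standing in for summed cell currents.
    Noise, drift, and converter effects are deliberately ignored.
    """
    stored = [[quantize(w, bits, w_max) for w in row] for row in weights]
    return [
        sum(dequantize(level, bits, w_max) * x for level, x in zip(row, inputs))
        for row in stored
    ]


if __name__ == "__main__":
    weights = [[0.5, -0.25], [1.0, 0.0]]
    inputs = [1.0, 2.0]
    print(levels_per_cell(8))           # 256 levels for 8 bits
    print(cim_matvec(weights, inputs))  # close to the exact result [0.0, 1.0]
```

Under these assumptions the only error source is quantization, so the result tracks the exact product to within roughly half a level step per weight. Real analog arrays add further error terms that this sketch leaves out.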

There is also a supply-chain dimension. Microchip says 150 billion units of the licensed SuperFlash technology have shipped to date and that the platform is licensed across all top-ten semiconductor foundries. For Mythic, that broad process availability may matter nearly as much as the headline 120 TOPS/W figure, because it lowers the risk that a memory choice becomes a scaling bottleneck once designs move beyond prototype volumes.

The larger signal is that analog AI is entering a more disciplined phase. Architectural novelty still matters, but the companies most likely to ship at volume will be the ones that can pair compute-in-memory ideas with memory cells, process options, and tool flows that semiconductor customers can actually qualify.