In brief:

- Texas Instruments has launched two MCU families with an integrated TinyEngine neural processing unit (NPU) that accelerates edge AI inference.

- The MSPM0G5187 Cortex‑M0+ MCU targets low-cost and battery-powered devices with AI capability at sub‑$1 pricing.

- The AM13Ex family combines real-time motor control with AI acceleration for adaptive control and predictive functions in industrial systems.

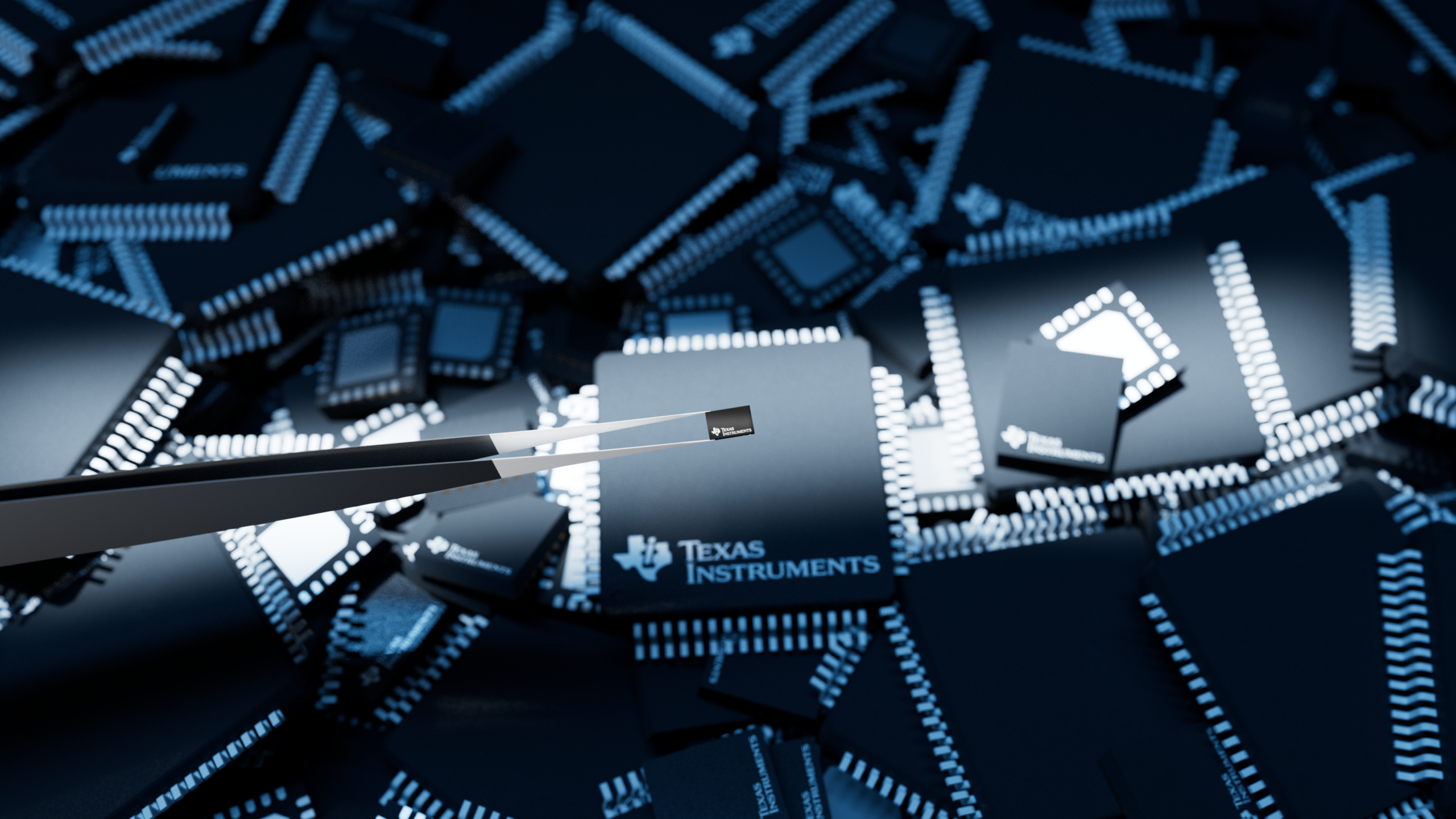

Texas Instruments has introduced two microcontroller families designed to bring edge artificial intelligence capabilities into smaller embedded systems. The MSPM0G5187 and AM13Ex MCUs integrate the company’s TinyEngine neural processing unit (NPU), providing hardware acceleration for AI inference workloads directly on the microcontroller.

The MSPM0G5187 device targets cost-sensitive and resource-constrained designs. Built around an Arm Cortex‑M0+ core, the MCU includes the TinyEngine NPU to accelerate neural network operations locally while the main CPU runs application code. This architecture allows embedded systems to perform AI inference without relying on external processors or cloud connectivity.

According to Texas Instruments, the NPU can cut inference latency by a factor of up to 90 and energy consumption per inference by a factor of more than 120, compared with similar microcontrollers that rely solely on CPU processing. The hardware accelerator also reduces the flash memory footprint required for AI workloads, enabling AI functionality within smaller and battery-powered systems.
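To put those ratios in concrete terms, the short Python sketch below applies them to a CPU-only baseline. The baseline figures (45 ms and 600 µJ per inference) are hypothetical values chosen purely for illustration and are not TI measurements; the factors are the vendor's headline claims, where 90 is a best case for latency and 120 is the quoted floor for the energy saving.

```python
def accelerated(baseline_latency_ms, baseline_energy_uj,
                latency_factor=90.0, energy_factor=120.0):
    """Scale a CPU-only baseline by the vendor-quoted improvement factors."""
    return (baseline_latency_ms / latency_factor,
            baseline_energy_uj / energy_factor)

# Hypothetical CPU-only baseline, for illustration only (not TI data).
latency_ms, energy_uj = accelerated(45.0, 600.0)
print(f"latency: {latency_ms:.2f} ms, energy: {energy_uj:.2f} uJ per inference")
```

Under those assumed numbers, an inference that took tens of milliseconds on the CPU would finish in well under a millisecond, which is the kind of margin that matters for always-on, battery-powered sensing.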

The MSPM0G5187 is aimed at applications such as wearable devices, smart appliances and building systems where local intelligence can support features including condition monitoring, gesture recognition and anomaly detection. The device is priced below US$1 in 1,000-unit quantities, positioning it as an entry point for deploying AI inference in cost-sensitive electronics.

Alongside the low-power MCU, TI also introduced the AM13Ex family for industrial and motor-control designs. These devices combine a high-performance Arm Cortex‑M33 core with the TinyEngine NPU and a real-time control architecture. The integration allows designers to implement adaptive motor-control algorithms and predictive functions while maintaining precise control loops.

The AM13Ex devices can manage control loops for up to four motors while running AI-based algorithms for tasks such as load sensing and energy optimisation. An integrated trigonometric math accelerator enables calculations up to 10 times faster than conventional CORDIC-based approaches, improving responsiveness in control applications.
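For readers unfamiliar with the baseline in that comparison, CORDIC computes sine and cosine through a fixed series of small rotations, gaining roughly one bit of precision per iteration. The Python sketch below shows the rotation-mode algorithm in floating point for clarity. It is an illustration of the general technique only: embedded implementations typically use fixed-point shifts and adds, the function name and iteration count are choices made here, and nothing in it reflects how TI's accelerator works internally.

```python
import math

def cordic_sin_cos(angle, iterations=16):
    """Rotation-mode CORDIC for an angle in radians within [-pi/2, pi/2].

    Returns (sin, cos) approximations.
    """
    # Elementary rotation angles atan(2^-i), normally stored as a lookup table.
    atan_table = [math.atan(2.0 ** -i) for i in range(iterations)]

    # Each micro-rotation stretches the vector; pre-scale to compensate.
    gain = 1.0
    for i in range(iterations):
        gain /= math.sqrt(1.0 + 2.0 ** (-2 * i))

    x, y, z = gain, 0.0, angle
    for i in range(iterations):
        d = 1.0 if z >= 0 else -1.0   # rotate toward the remaining angle
        x, y = x - d * y * 2.0 ** -i, y + d * x * 2.0 ** -i
        z -= d * atan_table[i]
    return y, x

s, c = cordic_sin_cos(0.5)
print(s, c)
```

Because every result requires the full loop, the iteration count sets both the precision and the latency, which is why a dedicated math accelerator can matter inside a tight motor-control loop.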

The new MCU families are supported by TI’s development ecosystem, including Code Composer Studio and CCStudio Edge AI Studio. The toolchain provides model selection, training and deployment capabilities across TI embedded processors, with more than 60 AI models and application examples available for developers.

Together, the hardware and software releases are intended to simplify the deployment of edge AI capabilities across embedded devices ranging from low-power consumer electronics to industrial automation systems.