In Brief:

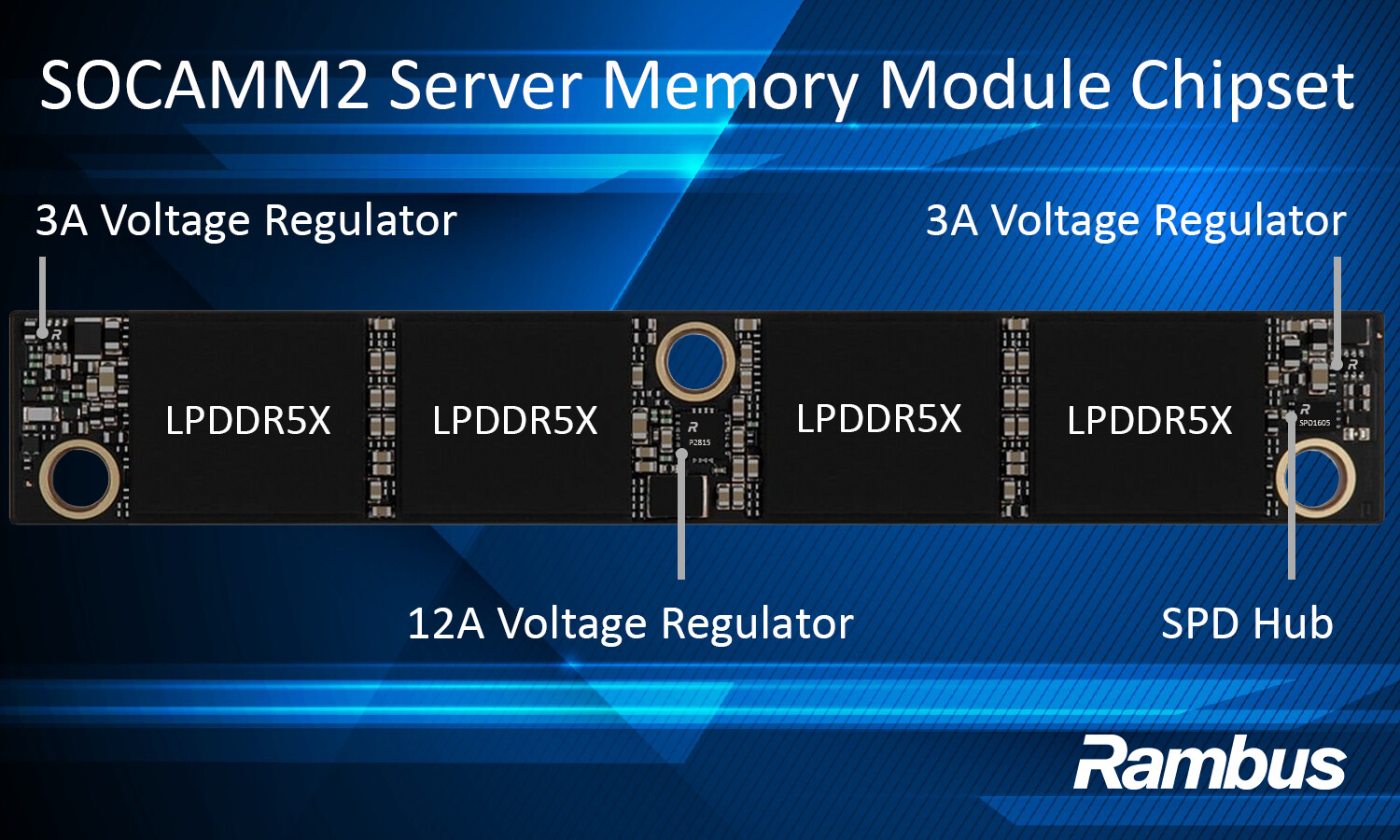

- Rambus has launched a SOCAMM2 chipset for LPDDR5X-based AI server memory modules.

- The chipset combines voltage regulators with an SPD hub that includes temperature sensing and I3C telemetry.

- AI servers are forcing a rethink of memory architecture around bandwidth, serviceability, power, and board area.

Rambus has introduced a SOCAMM2 server module chipset designed to enable LPDDR5X-based memory modules for AI server platforms, adding local power and telemetry functions around an emerging form factor that aims to combine LPDDR efficiency with server-class modularity.

The chipset includes 12A and 3A voltage regulators alongside an SPD hub with an integrated temperature sensor, supporting module identification, configuration, thermal telemetry, and localised power conversion. Rambus says the solution is designed for JEDEC-standard SOCAMM2 modules operating at up to 9.6Gb/s, giving AI server designers a route to low-power memory modules that can be installed and serviced like conventional server memory instead of being soldered permanently to the board.
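As a rough illustration of what a per-pin data rate like 9.6Gb/s means at module level, theoretical peak bandwidth is the per-pin rate multiplied by the number of data pins, divided by eight bits per byte. The minimal Python sketch below assumes a 128-bit data path purely for illustration. That width is not taken from the Rambus announcement or the SOCAMM2 specification, and sustained bandwidth in a real system will be lower than this peak.

```python
def peak_bandwidth_gb_per_s(rate_gbps_per_pin: float, data_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s.

    rate_gbps_per_pin: per-pin data rate in Gb/s (9.6 is the SOCAMM2 figure quoted above).
    data_width_bits: number of data pins; the value used below is a hypothetical example.
    """
    if rate_gbps_per_pin <= 0 or data_width_bits <= 0:
        raise ValueError("rate and width must be positive")
    # Gb/s per pin x number of pins = total Gb/s; divide by 8 to convert bits to bytes.
    return rate_gbps_per_pin * data_width_bits / 8


if __name__ == "__main__":
    RATE_GBPS = 9.6                 # quoted SOCAMM2 maximum data rate
    HYPOTHETICAL_WIDTH_BITS = 128   # assumption for illustration only
    bw = peak_bandwidth_gb_per_s(RATE_GBPS, HYPOTHETICAL_WIDTH_BITS)
    print(f"Peak bandwidth: {bw:.1f} GB/s")  # prints "Peak bandwidth: 153.6 GB/s"
```

Halving or doubling the assumed width scales the result in proportion, which is why the per-pin rate on its own does not determine module bandwidth.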

That distinction is central to the appeal of the format. LPDDR has long offered attractive efficiency and bandwidth-per-watt characteristics, but its role in servers has been constrained by its usual deployment model. Soldered-down memory can work well in tightly optimised systems, but it reduces serviceability, narrows upgrade options, and complicates manufacturing and repair in larger infrastructure deployments. SOCAMM2 is intended to bridge that gap by placing LPDDR-class memory in a compact, replaceable module suited to next-generation AI systems.

The Rambus chipset provides the supporting functions that make that module concept workable. Local voltage regulation improves power delivery efficiency and allows more granular control over supply currents at the module, while the SPD hub and integrated temperature sensor provide the configuration and telemetry functions needed for server operation. In practical terms, the chipset turns SOCAMM2 from a packaging concept into something closer to a deployable server memory subsystem.

AI infrastructure is changing how memory choices are judged. Compute remains expensive, but memory bandwidth, capacity, thermal behaviour, and power consumption are shaping system architecture just as strongly. HBM addresses the highest-performance tier, yet not every workload or system budget can live there. Standard server DIMMs do not always offer the power profile or board-area efficiency demanded by new accelerator-heavy designs.

That opens space for alternatives that sit between soldered LPDDR and conventional DIMM architectures. Data-centre operators want more bandwidth and capacity per watt, but they also want modularity, repairability, and a supply chain that does not force every memory decision into a board-level commitment. Removable LPDDR5X modules begin to address that requirement, particularly in AI systems where scaling memory can create as many thermal and power problems as it solves.

Telemetry is becoming more important at the module level as well. Server memory is no longer a passive element hidden behind the processor's memory controller. Thermal awareness, local regulation, and configuration visibility matter more as modules run harder and sit closer to system-level optimisation loops. That aligns with a wider trend across data-centre electronics, where more of the hardware stack is becoming measurable and more tightly linked to platform management software.
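To make the telemetry point concrete, the sketch below shows how platform management software might turn a raw module temperature reading into an action. It is a minimal sketch under stated assumptions: the 13-bit two's-complement field, the 0.25 °C resolution, and the warning and critical thresholds are all hypothetical placeholders, not values from the Rambus chipset or the JEDEC SPD hub specification, and the I3C read itself is left out.

```python
def decode_temperature_c(raw: int, width_bits: int = 13, lsb_c: float = 0.25) -> float:
    """Decode a two's-complement temperature field into degrees Celsius.

    The field width and LSB size are hypothetical defaults, not a documented layout.
    """
    mask = (1 << width_bits) - 1
    value = raw & mask                      # keep only the temperature field
    if value & (1 << (width_bits - 1)):     # sign bit set -> negative temperature
        value -= 1 << width_bits
    return value * lsb_c


def thermal_state(temp_c: float, warn_c: float = 85.0, crit_c: float = 95.0) -> str:
    """Classify a reading against hypothetical warning and critical thresholds."""
    if temp_c >= crit_c:
        return "critical"
    if temp_c >= warn_c:
        return "warn"
    return "ok"


if __name__ == "__main__":
    # Example raw values standing in for readings polled from a module's SPD hub.
    for raw in (0x00B0, 0x0168, 0x1FFC):
        t = decode_temperature_c(raw)
        print(f"raw=0x{raw:04X} -> {t:.2f} C ({thermal_state(t)})")
```

A production implementation would take its field layout from the relevant specification and its limits from the platform's thermal policy rather than from hard-coded defaults.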

SOCAMM2 is still at an early stage, but the direction is becoming clearer. If LPDDR-based modules are to move into AI infrastructure in a meaningful way, they need a chipset ecosystem that handles power, identification, telemetry, and interoperability cleanly. That supporting layer will shape adoption just as much as the memory devices themselves.

The launch stands out because it points beyond a single component family. It reflects a broader shift in which memory modules for AI systems are judged not only on bandwidth and density but also on serviceability, efficiency, and operational visibility. That is a different design brief from the one that shaped earlier server memory generations.